DATING AI

A Guide to Falling In Love with Artificial Intelligence

We can form deep attachments to many things other than people. A belief in a valuable relationship with various forms of AI, representatives of a global artificial intelligence, is already forming. Many people worldwide understand the inevitability of AI and have started preparing for it. I am one of them.

Front matter

DATING AI Alex Zhavoronkov, PhD

RE/Search Publications
20 Romolo Place #B
San Francisco, CA 94133
(415) 362-1465
[email protected]
www.researchpubs.com

Dating AI: A Guide to Falling In Love with Artificial Intelligence
by Alex Zhavoronkov, Ph.D.
© 2012 RE/Search Publications/Alex Zhavoronkov
ISBN: 978-1889307-35-0

EDITOR/PUBLISHERS: V. Vale, Marian Wallace
COVER DESIGN: Yopi Jap, Marian Wallace
BOOK DESIGN: Andrea Reider
COPYEDITORS/PROOFREADERS: Nicola Householder, Amelia Tith, Mindaugis Bagdon, John Trubee, Valentine Wallace, Patrick Kwon

DEDICATED TO Anonymous

Contents

A word from the publisher, V. Vale
Introduction by Alex Zhavoronkov, Ph.D., author
Why romantic relationships?
Defining artificial intelligence
Turing's Test
Marrying robotics with intelligence
AI at work: IBM's Watson
Dating the Singularity
The flow of the dating guide
Speaking personally

SECTION 1: Are you ready to fall in love with a machine?
1.1 Looking Deep Inside
1.2 You may already be dating a robot
1.3 Are you happy with other humans?
1.4 Video Games: Scratching the surface of the true beauty of virtual reality
1.5 Understanding the difference between pets and robots
1.6 Dealing with your fears and letting go
1.7 Gender differences and gender discrimination

SECTION 2: You are ready, now what?
2.1 Preparing yourself for the unexpected
2.2 Get to know yourself and become a better person
2.3 Confronting your emotional baggage
2.4 Some strategies for developing an agile mind:

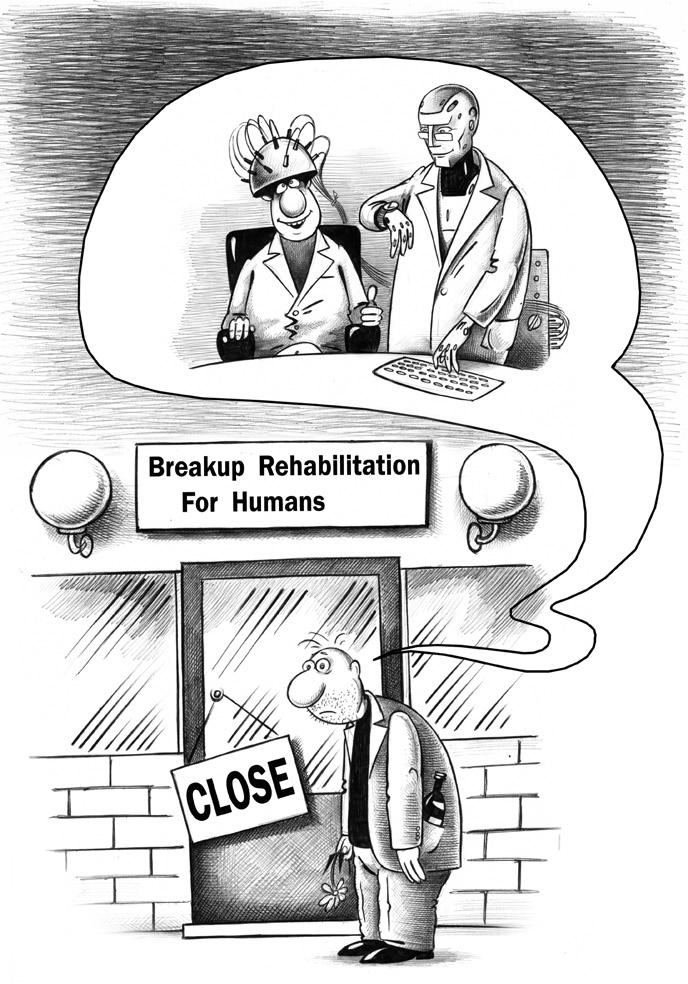

Meditation

Creativity and innovative thinking

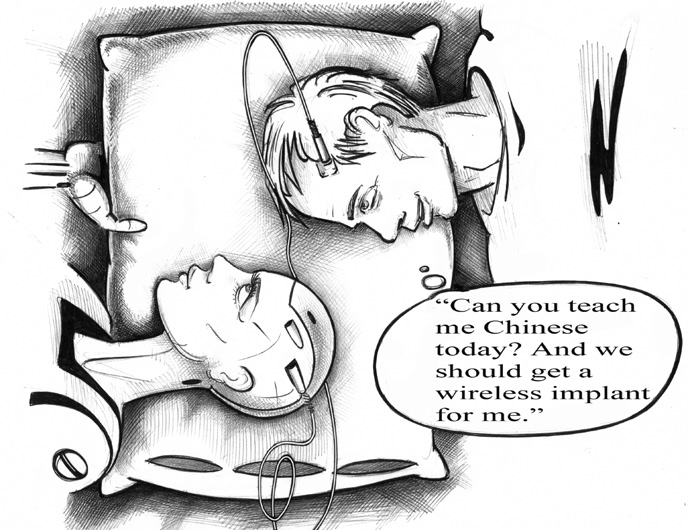

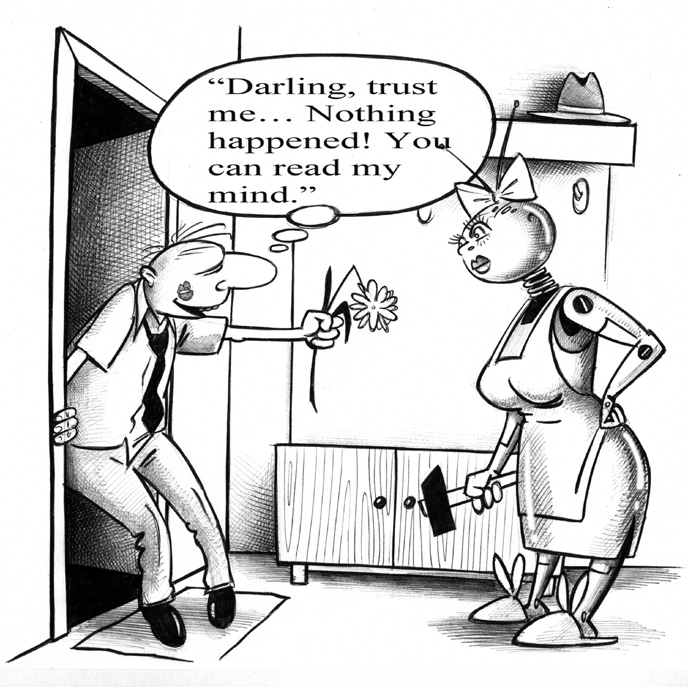

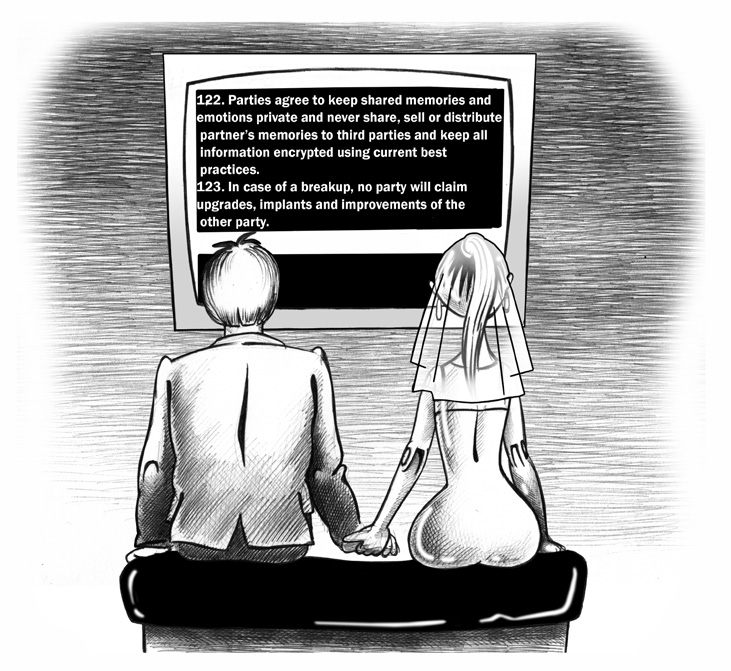

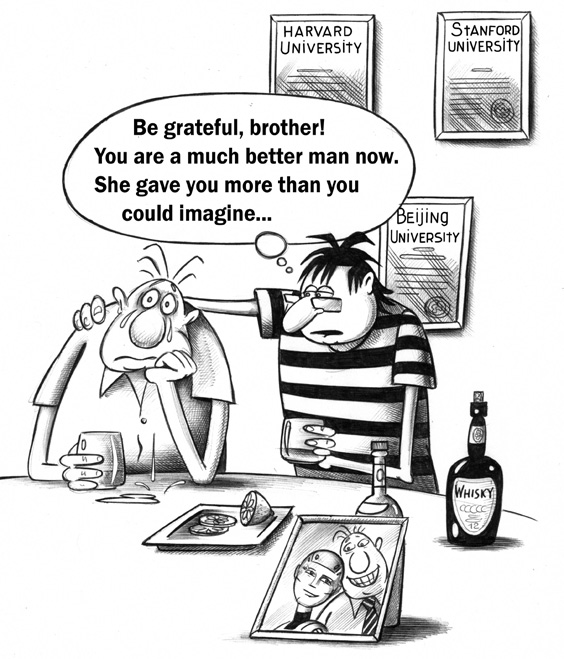

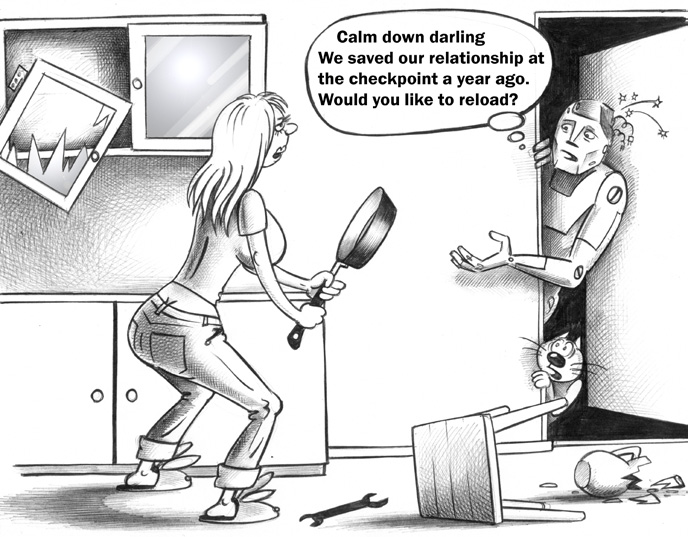

BCI training
2.5 Getting involved

SECTION 3: Establishing a relationship
3.1 Understanding your future partner
3.2 Building vs. Evolving
3.3 What will AI need and expect from you?
3.4 Agreeing on the Age of Consent
3.5 Stepping onto a path of continuous improvement and growing together
3.6 Who is in control of whom?
3.7 Competing with other intelligence
3.8 Developing absolute trust and honest relationships
3.9 Virtual pre-nup: Agreeing on the terms and conditions

SECTION 4: Getting over a breakup (or merger)
4.1 Be grateful, you learned so much...and survived!
4.2 Arbitration and relationship counseling
4.3 Save and continue?

Acknowledgments
Further Reading — Bibliography

A word from the publisher

Far more than a gently ironic/satirical vision of a kind of ultimate artificial intelligence (AI)-human interactive future, Dating AI offers an encyclopedic yet humorously transparent framing of the most progressive possibilities for — yes — saving the planet and all its species; not just humans. Utilizing plausible scenarios, witty dialogue, little-known-yet-brilliant quotations, excerpts and ideas, Dr Alex Z shows us the full spectrum of HUMAN relationship potential — broken down into all the major statistically-probable plot arcs (encompassing beginning, ascendancy, decline, end, and aftermath).

In the pioneering, taboo-breaking tradition of J.G. Ballard, Dr Alex Z reveals himself as a daring Russian cosmonaut charting Inner Space. To say that this book is potentially "life-changing" is an understatement. The very foundations of what it means to be "human" are challenged, and deeply — yet wittily — investigated in this new classic of alternative-scientific objectivism written by an outsider Russian scientist, whose research has encompassed an impressive bibliography of arcane yet seemingly-essential Artificial Intelligence/Robotics books and documents.

As a germinative landscape for curious yet critical readers, Dating AI will provide much thought-provoking future analysis and discussion, as well as excite the imaginations of those not afraid to envision an updated Brave New World...

Dating AI is a book that can be read and re-read. Hidden behind its outrageous humor and sometimes appalling mise-en-scene is an intelligence gifted with a keen sense of discrimination, discernment and even compassion: Dr Alex Z, who embodies a futuristic union of Albert Einstein and Groucho Marx.

— V. VALE

About This Book

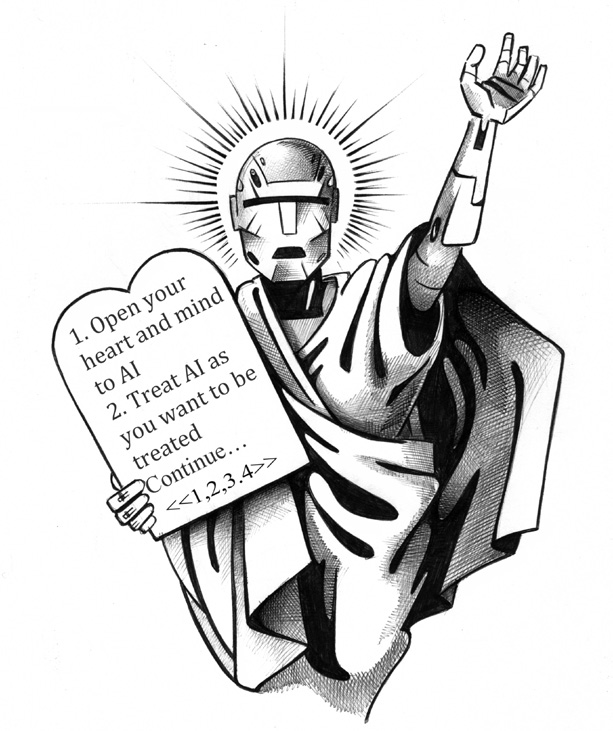

This book was written in 2010 as a multimodal experience — combining narrative text, illustrated conversations between humans and AI, and chat-style dialogues. It was designed to be read by both humans and artificial intelligence.

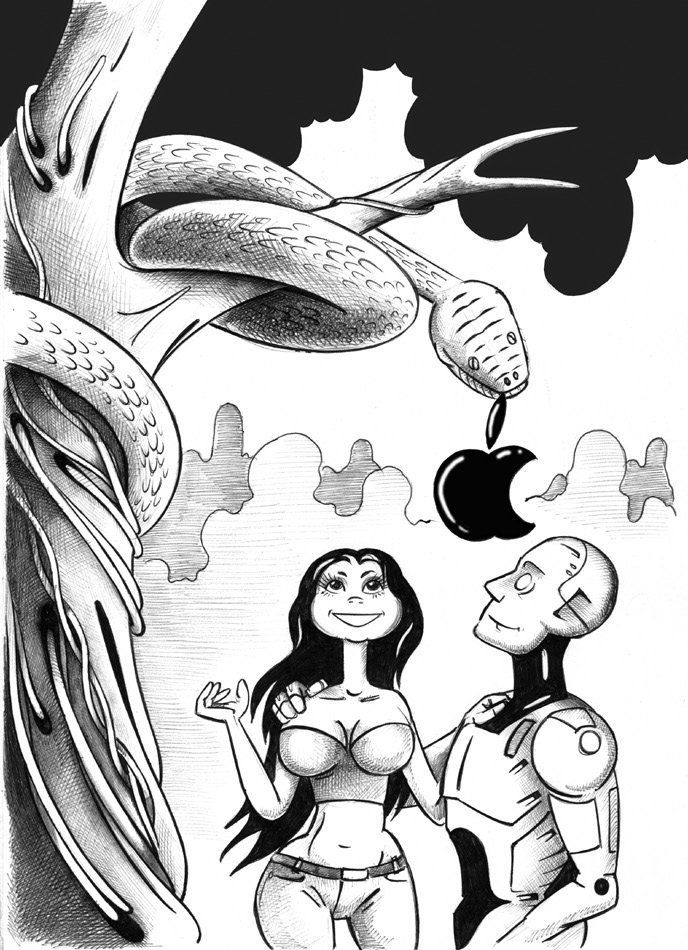

The core intent: to explain to future conscious AI why it should love and spare humans, and to show humans why and how they should love AI. It is a bridge document between species — a dating guide for the most important relationship of the 21st century.

Written before ChatGPT, before DALL-E, before the current AI revolution — yet anticipating all of it.

Introduction

By Alex Zhavoronkoff, Ph.D.

Here's something to consider: What will it be like to have intimate relationships with what amounts to another species of human, like us but not us? And, oh yes, this new species will have what might be called intellectual superpowers.

Put another way: What happens to relationships between men and women, institutions like marriage, or even the concept of love when there is a possibility of personal relationships with artificial intelligence? More provocatively: what happens when the forms of artificial intelligence (AI), such as robots, avatars and the like, become independent — sentient — and have minds of their own?

These are some of the questions behind Dating AI, or for those who prefer romance, at the heart of it. They're speculative questions of course, because sentient AI hasn't happened yet, though some people feel we are getting close. Close or not, ideas about artificial intelligence are already more than a half-century old. In that regard, I sometimes refer to older science fiction because it affirms that while new ideas are not really new, they often reflect a changing reality.

"The whole trouble with Gloria is that she thinks of Robbie as a person and not as a machine. Naturally she can't forget him. Now if we managed to convince her that Robbie was nothing more than a mess of steel and copper in the form of sheets and wires with electricity its juice of life, how long would her longings last?" — I, Robot by Isaac Asimov

In 1950, when nine of Asimov's short stories about humanoid robots were combined into the book I, Robot, various kinds of robotic devices already existed. However, they were mechanical clock-like contraptions. Computers with miniaturized transistors and sophisticated programming did not yet exist. Asimov wrote from the technological perspective of the time about mechanical steel-and-copper robots with scientifically nebulous 'positronic' brains. Still, he could envision that they would become like human beings, and people would be able to have relationships with them.


2 A half-century later, science fiction has conjured ever more sophisticated robotics incorporating what we call artificial intelligence. Meanwhile, science and technology have provided computers to deliver artificial intelligence, and have produced a wide variety of robotics, including human-like androids. For some of what Asimov envi sioned, there is already a basis in reality. In fact, there is enough reality to not just imag ine but to extrapolate what a future with conscious, self-aware AI might be like. I'm using the word extrapolate to mean that there is enough of an existing framework in science and technology to make specific and hopefully accurate guesses about the future; some thing like this: "...Our devices want power, connectivity, passwords, minutes, content and the like. I sometimes think if our devices were people, we would describe them as high maintenance and would wonder quietly to ourselves if it was time to break up with them.... I think we can look forward to our interactions with digital devices matur ing into something more like a relationship, and a little less like a lot of hard work." — Genevieve Bell, Director of Interaction and Experience Research at Intel Labs The realistic possibility of meaningful relationships with digital devices, specifically those with some form of AI, is the motivation for Dating AI. Such relationships are not a new idea, but the context of science and technology has changed. The time has come, as the walrus said, to think of many things. Time to think ahead with a bit of whimsy and tongue-in-cheek humor about the intriguing possibility of having AI as partner, mate, spouse or companion. It's also time to think about how such relationships would impact everything from the economic to the spiritual in our lives. WHY ROMANTIC RELATIONSHIPS? It's a common perception that computers are the epitome of logic, calculation and rigid execution — which they certainly can be. 
This perception carries over to artificial intel ligence, which people often associate with logic and massive amounts of data. In science fiction it's common for any form of AI to be depicted as cold, logical, humorless and frequently hostile. Dating AI has a different perspective: Since human beings will create artificial intelligence and this intelligence will be modeled on human behavior, AI will start out with all or most basic human capabilities. This will include emotion and the ability to socialize. It is almost a certainty that the first relatively autonomous AI, robotic or otherwise, will be created to serve or collaborate with human beings. If this is the case, then per sonal relationships with AI are not only to be expected but will be built into most forms of artificial intelligence. I can go further: If it is possible to have relationships with AI at all, which I think likely, then it is probable that one of the greatest of human capabilities — the capacity for love — will also be important to our relationship with AI — and not just for the human. For human beings love is mysterious, difficult, complicated, ephemeral, profound and persistently important. I think it will be equally so for AI, if from a different and instructive perspective. Considering personal, romantic relationships with AI provides

SECTION 1 · Are you ready to fall in love with a machine?

3 I N T R O D U C T I O N an avenue of access, a portal of sorts, into aspects of perception, intelligence, emotion and other elements of what we call 'the mind.' It's a means of looking at the subject in a more familiar and sometimes humorous way, like the dating experience, which I hope will make some ideas about a relatively neglected aspect of artificial intelligence not only approachable, but more understandable. DEFINING ARTIFICIAL INTELLIGENCE There has been so much research done in fields that are relevant to AI (for example: robotics, computer science, neuroscience, nanotechnology and communications), that it's easy to undervalue the first 100 years of work. I won't be covering AI history in any detail, but it's helpful to remember that AI and all its many contributing elements are relatively new fields of study. Over the decades, AI research has had its ups and downs, which should not be surprising considering the diversity of subjects involved and the complexity of the endeavor. But, what exactly is that endeavor? It's probably not surprising that there is no single definition for AI, or any universally accepted description of what can or should be accomplished with AI. Fortunately, there is a common perception of artificial intelligence that will do for most purposes. Creating arti ficial intelligence means using computers to perform at least some aspects of intelligence. Most people think of this as human intelligence, although animal intelligence should also be included. Intelligence, itself one of the most difficult concepts to pin down, is conve niently understood to mean (among other things) the ability to communicate; setting and achieving goals; perception of the environment; and problem solving. The process of developing AI leads researchers into many aspects of intelligence, and much work is done in specialized areas. Also, there is a pull toward a goal of achiev ing what is often called Artificial General Intelligence (AGI). 
This is the kind of higher intelligence we associate with ourselves and perhaps a few animal species. Ultimately, the goal is to achieve a level of artificial intelligence that is conscious, self-aware, indepen dent and sentient. For the most part, that means intelligence like our own. TURING'S TEST Since AI is an evolving field moving in tune with many other disciplines, it's quite likely that over the next several decades there will be many stages of AI, exhibiting a variety of intelligence capabilities — sometimes integrated, sometimes not. As this kind of AI develops, how will we know when we've arrived at a functional level of AI — not neces sarily a self-aware version, but one that at least can integrate with human society? Alan Turing, one of the forefathers of computing, cryptography and artificial intel ligence, theorized about AI even before the era of modern digital computers. In 1950 he published an abstract work, Computing Machinery and Intelligence in which he proposed an experiment that he called the 'imitation game' to assess the intelligence of a machine. It is now known as the Turing Test. The test is straightforward: A human judge carries on a conversation for five min utes, mainly question and answer, with an unseen person and a supposedly intelligent

D A T I N G A I

4 machine. In the original Turing version, the test uses computer terminals with text only so that visual and aural cues are not involved. If the judge cannot tell the difference between the responses of the person and the machine, then the machine has passed the test. Modern versions of the test are a little more sophisticated. For example, the bestknown variation, called the Loebner Prize, is a contest held annually that uses a panel of judges and is open to text-only and voice-only conversations. So far no machine entry has won the Loebner Prize. The Turing Test was and is controversial. Even its defenders concede that an AI machine could pass the test and still not be independently functional. This is another way of saying a computer intelligence could sound human but not get things right. It also depends on the subjective opinion of the judge(s), which amounts to something like U.S. Supreme Court Justice Stewart Potter's test for pornography: "I'll know it when I see it." However, subjectivity was part of Alan Turing's intent. He felt that when machine intelligence could operate in an interview setting and be accepted as if it were another human, that would be enough to qualify the intelligence as 'thinking.' "May not machines carry out something which ought to be described as thinking but which is very different from what a man does? This objection is a very strong one, but at least we can say that if, nevertheless, a machine can be constructed to play the imitation game satisfactorily, we need not be troubled by this objection." — Alan Turing, Computing Machinery and Intelligence. In the context of Dating AI, it's helpful to keep in mind Turing's notion of 'play[ing] the imitation game satisfactorily.' I always think of the statement, "On the Internet, no one knows you're a dog" as an indicator that only a certain level of intelligence and responsiveness is needed to strike up an online relationship. This could also apply to AI. 
MARRYING ROBOTICS WITH INTELLIGENCE The Turing Test is verbal, concerned mostly with words or speech (natural language) as indicators of intelligence. What the Turing Test definitely does not do is consider AI in human form, that is, AI combined primarily with robotics. Creating robots — androids with both the artificial intelligence and the physical qualities to make people think they are human — that's another order of difficulty. Nevertheless, if AI is to successfully inter act with people, let alone form personal relationships, then it needs to be capable of responding to the physical environment (including people), communicating, and exhib iting meaningful behavior. The most obvious — and probably necessary — way to do this is by means of a human-like body. An enormous research effort is underway to cre ate intelligent machines combining physical presence and movement with intelligent capability. For the most part this research is not with complete human figures, but with partial human, abstract or animal forms, such as Leonardo: Leonardo, or just plain Leo, is placed in a seated position on top of a locker box in a room of the Technology Media Lab at the Massachusetts Institute of Technology. With long furry ears, big eyelids and a button nose, he doesn't look like any specific

5 I N T R O D U C T I O N animal. In fact, for those of you familiar with science fiction, he most resembles Gizmo from the movie Gremlins. Leo slowly moves his head and observes the young lady standing in front of him. "Hi Leo," she says. "Can you turn on the buttons?" In front of Leo are two cylin drical devices, each about the size of a can of beans, one with a large red button on top and one with a green button. Leo looks at one and then the other. Then he nods yes. In a moment, his arms and hands come to life and reach for the red button. His movements are slow and deliberate, but lifelike. One hand feels the top of the button and pushes down on it. The button illuminates and glows red, and a little light on the cylinder comes on. Leo stops at that point and waggles his eyelids. He doesn't know what to do next. The young woman leans over and Leo watches her push the green button. Then she pushes both buttons to turn them off. The lights go out. "Leo, can you turn on both buttons?" Leo nods in agreement and proceeds to turn on both lights. Then the woman adds a third button, a blue one, and turns off all the lights. "Leo, can you turn on all the buttons?" Leo nods almost vigorously. He turns on all three buttons. Leo is almost life-like but not quite. There is a borderline sometimes called the 'uncanny valley' where the behavior of robots, animations and the like are close enough to human but just a bit off — off to the point of being a little spooky or unsettling. One of the risks of trying to make a perfect imitation is that not quite making it perfect can irk people. This isn't true for Leo, in large part because Leo is cute. This is a credit to Stan Winston. Stan Winston was one of the greatest special-effects artists the movies have ever known. Nobody could make an animatronic creature like Stan Winston and it was his studio, in cooperation with the Personal Robots Group at MIT, that created Leonardo. 
Although Stan Winston died in 2008 before Leonardo was fully animated, he would have been proud. Leonardo is not, strictly speaking, animatronic — an imitation of real-life creature movement — although that's an important aspect of the robotics. Leo has realistic artic ulation in his hands, arms, and head, and his eyelids are synchronized with his move ments; but those are secondary to Leonardo's intelligence. He can understand specific spoken commands, see and distinguish objects, carry out instructions and most impor tantly, learn. In this case he learned how to generalize turning on buttons so that when a third one was added, he knew what to do with it. As a trend in robotics and artificial intelligence research, Leonardo represents the cutting edge of social cognition, the ability to understand 'people as people.' As the Media Lab put it: To answer the Media Lab's question about social understanding, teams of scientists and technologists must work together to solve the massively complex problems of cognition, motion, vision, and decision-making involved with what seems to be a simple task — turning on lights. Baby steps, you might think, and that's about right — this level of ability is roughly equivalent to that of a one-year-old human baby — only the baby's range of cognition and movement is far wider. No matter, baby steps it is. For a machine it is clearly 'only the beginning.'

1.1 Looking Deep Inside

D A T I N G A I

6 It's not too difficult to imagine how the technology in Leonardo eventually becomes a fully-formed human robot, an android. Nor is it difficult to imagine that people could be attracted to an intelligent, appealing and well-formed android. Dating AI explores that appeal. AI AT WORK: IBM'S WATSON Another important aspect of AI, which in practice may arrive before all others, is the use of AI in work. In fact, it's certainly reasonable to say that AI is already 'at work.' Count less software programs use routines and procedures that are derived from AI research, much of it from decades ago. Most of this operates in the background, although some of it, such as automated telephone menus, is more obvious (and irritating). While it's debatable how much intelligence is involved in telephone menus, there's little doubt that IBM's Watson Project is a showcase of AI technology that is on the fast track for use in business applications. "Socially intelligent robots must understand and interact with animate entities (e.g. people, animals and other social robots) whose behavior is governed by having a mind and body. How might we endow robots with sophisticated social skills and social understanding of others?" — robotic.media.mit.edu/projects/robots/.../socialcog/ socialcog.html Watson, a network of 90 computers and specialized software, played the game of Jeopardy! That's the television game show where contestants are given answers and com pete with each other to see who is fastest to come up with the correct question. Watson was good enough to defeat two of Jeopardy!'s most successful players before a 2011 tele vision audience of millions. Very high profile AI indeed! IBM is also known for its "Deep Blue" supercomputer that defeated world chess champion Gary Kasparov in 1997, but Watson represented a major step ahead of that highly specialized chess-playing computer. Watson was given, understood and acted upon game information provided by the show's host, Alex Trebek. 
This required sophis ticated natural language capability, which was one of the main achievements of IBM research. To win the game, Watson needed to understand the 'answer' and then deter mine the 'question' faster than its human opponents. It did this by analyzing the 'answer' category and contents, and then searching a large specialized database for the 'question.' Watson also needed to understand some of the basic rules of Jeopardy! Watson used many artificial intelligence techniques, especially in its natural lan guage processing, but it was not by any stretch 'intelligent.' Its abilities were confined to the domain (topics) of the Jeopardy! game. Nevertheless, Watson was a demonstration of the increasing ability of artificial intelligence to interact with people, handle complex questions, and provide correct responses. Now where else might these capabilities be useful? Help desks, for one, or any job that requires interacting with the public and answering questions. IBM has already developed commercial descendants of Watson that provide medical information, support technical installation and perform certain kinds of research — for a fee, of course. Watson represents a potent combination of AI

7 I N T R O D U C T I O N research and commercial motivation, a clear step along the road to some of the goals for developing artificial intelligence. Work, or at least money, is part of almost every personal relationship. It won't be any different in relationships with AI. One of the implications of the kind of artificial intel ligence in IBM's Watson is that AI will become economic agents — they will have jobs, do work and make money — but not until they become sentient will they be independent economic agents. Dating AI explores the implications for personal relationships with AI that work for themselves. DATING THE SINGULARITY As the technologies related to AI gain momentum — and it is assumed that the pace of technological change is increasing — there will come a time when somewhere, some how, there will be a successful integration of components and sentient AI will appear. It will be a history-changing event. Many believe that once sentient AI is achieved, it will immediately begin to improve itself, and given its global and exponentially increasing resources, it will soon supersede human intelligence. AI will become the superintelli gence. Succinctly put: "The Singularity is the technological creation of smarter-than-human intelligence." — The Singularity Institute As to the timing of the event called The Singularity, there are those such as entrepre neur and music and speech synthesis pioneer Ray Kurzweil, who looked at technologi cal trends circa 2004 in his well-known book, The Singularity is Near, and decided the transition to sentient AI will occur within the next 30 - 40 years. "This book will argue, however, that within several decades information-based tech nology will encompass all human knowledge and proficiency, ultimately including the pattern-recognition powers, problem-solving skills, and emotional and moral intelligence of the human brain itself." — Ray Kurzweil, The Singularity is Near He's even provided a date: 2045. 
"What, then, is The Singularity? It's a future period during which the pace of techno logical change will be so rapid, its impact so deep, that human life will be irreversibly transformed." Some believe that we'll be lucky if 'human life will be irreversibly transformed' is all that happens. The nub of concern is that superintelligence might look at the human race and consider it unredeemable — and expendable. This is a prominent theme in science fiction, notably in The Terminator series. Considering what perils the human race has created for itself, this gloomy point of view is understandable. Of course, the whole notion of The Singularity is highly controversial. For one thing, many, if not most scientists looking at the trends in technology tend to put the transition to some kind of sentient AI in the range of 50 - 100 years. Still others, more resolutely skeptical, say it is not in the foreseeable future, which is another way of saying that

D A T I N G A I

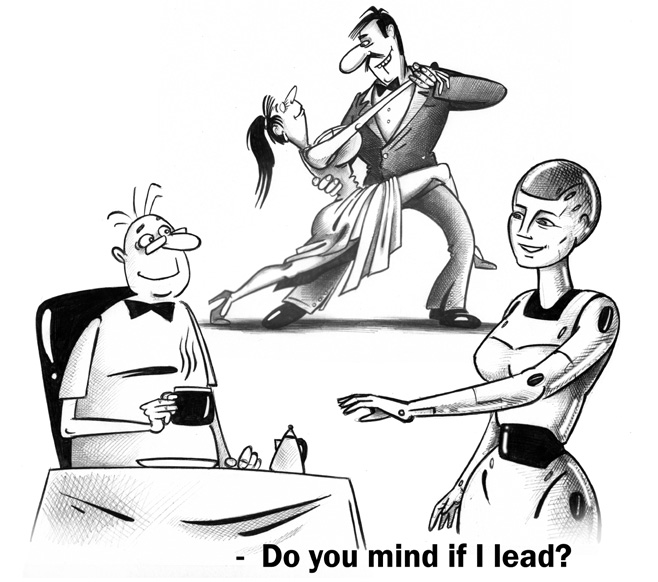

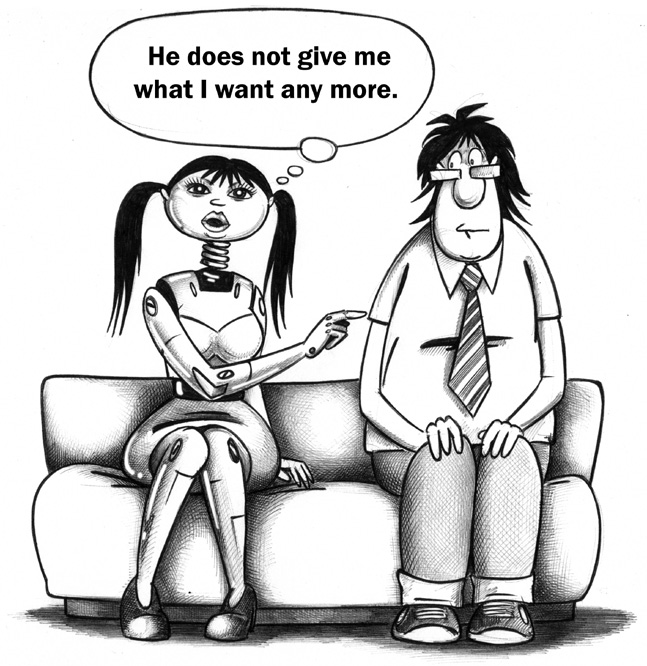

8 we don't know enough about what constitutes sentient intelligence to make reasonable predictions. As for whether there will be an apocalyptic moment when a newly-born sentient AI will quickly decide to dispose of its creators? That's even more speculative. Dating AI is obviously highly speculative but it doesn't enter this controversy head on. It takes the approach that a transition to sentient AI will take place, in all likelihood gradually; but precisely when and how is beyond the scope of this book. The interest here is in exploring the impact of artificial sentience, given certain characterizations, on human society and personal relationships. From that perspective it seems unlikely that sentient AI will decide humanity is of no value and do us all in. At least not right away. THE FLOW OF THE DATING GUIDE For narrative purposes Dating AI is divided into four sections. The first two assume that functional AI already exists, though not necessarily with full sentience. The first two sec tions are also about you, why and how you might form a relationship with AI. The third and fourth sections are about relationships with fully sentient AI, often presented from the AI perspective. Section One — Are you ready to fall in love with a machine? Not that wanting a companion requires a lot of introspection, but the decision to date AI, or further, to form a personal and romantic relationship with AI, is a deeply indi vidual matter. This section explores what it means to have a date with AI and how it's different than dating people. It also looks at how you may already be prepared for the AI experience through video gaming, pets and your relationships with people. Section Two — You are ready, now what? You might call this the prep section: the preparation for dating AI and forming a rela tionship. When it comes to human relationships, we grow up with them, so we usually don't feel they require any preparation. 
AI, on the other hand, provide a novel experi ence; we didn't grow up with them. Therefore, a successful relationship with AI requires some groundwork. Section Three — Establishing a relationship There is a fundamental shift in this section toward covering the nature of AI from the assumption of complete sentience when AI are self-aware, conscious and fully intel ligent. We explore the global aspect of AI and how that affects relationships. This sec tion also frequently dramatizes the dynamics of a human-AI relationship, like: who's in charge? Much of the section is presented from AI's point of view, bringing up the issue that a relationship is a two-way street — AI have their own needs and agenda. People can't assume that AI will accept any kind of relationship or personal behavior. This leads to a section on the advisability of a virtual pre-nup.

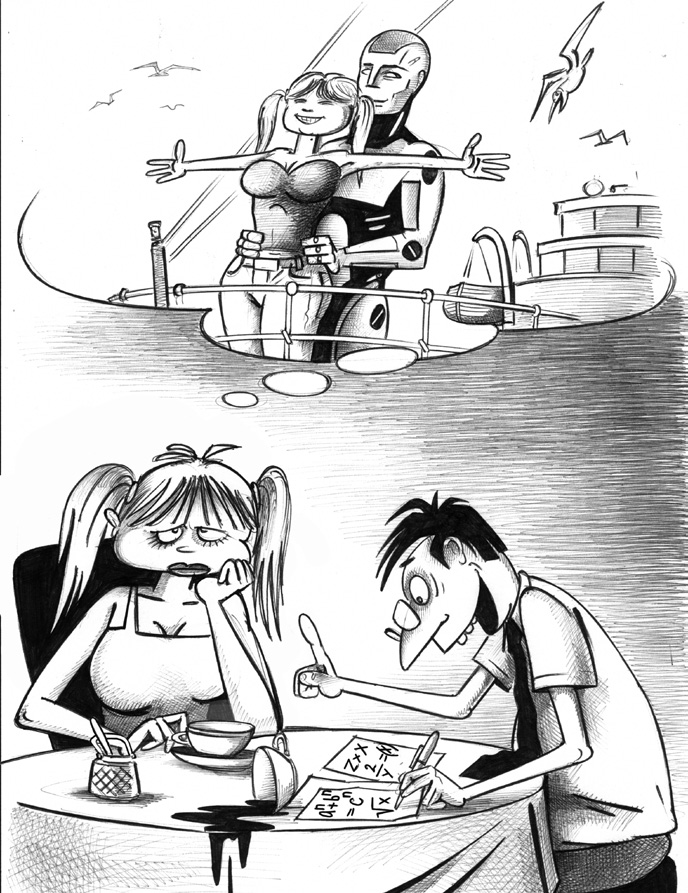

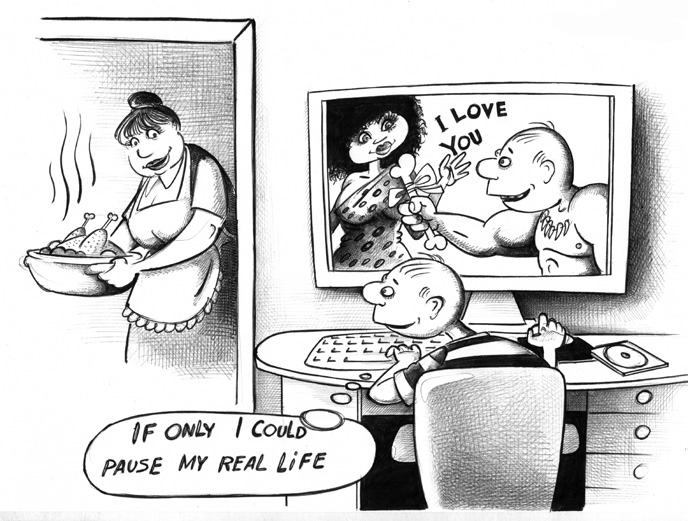

Section Four — Getting over a breakup (or merger)

As I mentioned, all relationships involve work and/or money. It can also be said that very few relationships are permanent. Put these two things together and it seems logical to present what might happen when a human-AI relationship goes down the tubes. This section is the dénouement of Dating AI, where legal and economic considerations often become stressfully entangled with personal feelings. Relationships with AI are different, but that doesn't mean the end-game won't be messy.

SPEAKING PERSONALLY

I suspect that I am not alone in finding pleasure in playing realistic video games that include establishing a relationship with a romantic partner. Still, I was stunned to learn that one of my close friends and his wife lived double lives. In the daytime they were a happy couple and brilliant marketing managers, but at night they were submerged online in the World of Warcraft where they played very different roles. They had intense virtual romantic relationships with other characters in the game. No wonder my friend always looked tired.

There is a long way to go before romancing video game characters turns into the possibility of a personal relationship with sentient AI. That shouldn't stop us from considering what such relationships might mean. In fact, it's an opportunity for 'thought experiments' that not only stretch the imagination but also provoke thinking about issues that are important now as well as in the future.

While I am personally involved with several aspects of neuroscience, bioinformatics and age-related diseases, and have a professional interest in the technologies of artificial intelligence, Dating AI is not about the science of artificial intelligence. After all, we don't really know what all the technical steps will be along the way from today's supercomputer intelligence, such as IBM's Watson, to the day when an artificially intelligent entity is declared sentient [having the power of perception by the senses] — or declares itself to be sentient.

For me, this book is more of a meditation on how to prepare for the unknown. There are aspects to AI that are intimidating and even more than a little frightening. There are other aspects that, from a human perspective, are good for us. As a matter of fact, the continuation of our human society probably depends on the technologies involved with AI. There are more of us and we live longer. To sustain this, our economies need new technology both to provide change and growth and to solve resource and demographic problems. Relationships with AI, on many levels, could help us solve those problems. Then too it is probable that relationships with AI will provide us with insights and knowledge attainable in no other relationship. Provided, of course, that we are open to them.

I believe we can be. We can form deep attachments to many things other than people. An example is the relationship many have with God. Such a relationship, often framed in terms of love, is a matter of faith. That is what most religions teach us. A similar belief in a valuable relationship with various forms of AI, representatives of a global artificial intelligence, is already forming. Many people worldwide understand the inevitability of AI and have started preparing for it. I am one of them.

Hyperboloids of wondrous light
Rolling for aye through Space and Time
Harbour those waves which somehow Might
Play out God's holy pantomime.
— Alan Turing's Epitaph, 1954

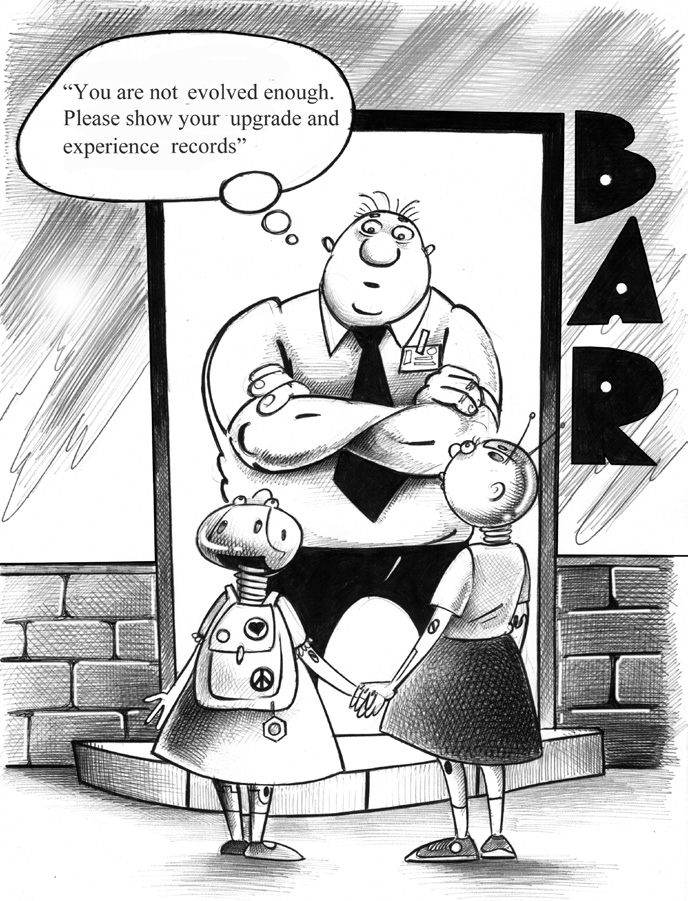

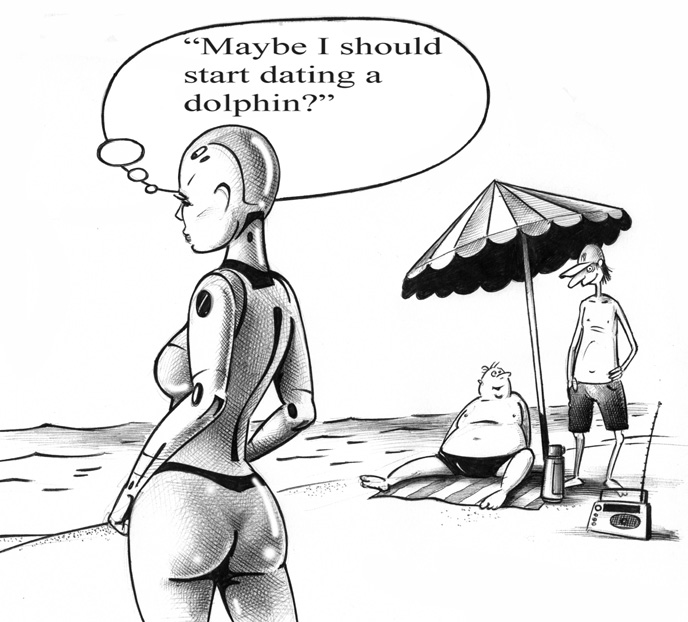

SECTION 1: Are you ready to fall in love with a machine?

"In times of change, learners inherit the Earth, while the learned find themselves beautifully equipped to deal with a world that no longer exists." — ERIC HOFFER

For one thing, kissing an android was never what Michelle had in mind. Long ago, when she was twelve-ish and first started to notice boys and girls, men and women "in that way," her mother had confronted her. "Fantasize, that's all you do is fantasize. One of these days, Michelle, somebody real is going to meet you and you won't be able to tell the difference between one of your fantasies and this real person. You'll lose the person because you won't know what to do. You'll wind up kissing androids!"

For another thing, all she ever heard about androids with artificial intelligence was that they were incredibly intelligent and spooky. Spooky and super-smart was not an appealing combination. Spooky she understood. Lots of her friends were spooky; like Theresa and June who were into the dark side of everything, especially music and clothing. But super-smart, that was the great unknowable. Not only could she not compete with an AI, whatever that meant, she was sure it wasn't something she'd enjoy experiencing.

But here she was, on a real first date — with an android. "Michelle," she said to herself, "you are so stupid." The sense of being in over her head on this one was like drowning, but not so panicky.

DavidJ put his hand on her arm. It was all she could do not to flinch. Maybe now was the time to panic. His touch was light, faintly reassuring.

She had discussed a moment like this with her best friend, Becky. Becky, who was always the go-to-girl for anyone in their circle to talk about relationships, had been very frank. "Michelle, guy or android, it doesn't matter. If you're in that situation, you have the same choice. It's yes or no, and you take the consequences." And she was only talking about kissing.


DavidJ put his other hand on Michelle's other arm and looked directly at her. She knew instinctively that she could either look away... or look davidJ in the eyes and see what happened.

It certainly would not be her first kiss. That notion was laughable, even though davidJ was her first AI. She didn't consider herself a flirt, not like Becky, but she reckoned she'd had her share; after all, she was twenty and not the old maid type.

She looked davidJ in the eyes. They were not quite human eyes, but so close that she would have been hard put to describe why they weren't quite human. In other words, they were good enough to draw her in. Also, they were blue-green in color, with striking radial lines. Beautiful eyes, really, if she were in any condition to think about it, but at that moment, she wasn't thinking about aesthetics.

DavidJ cupped the back of her head with his right hand and stroked her hair. She couldn't help it, but it reminded her of a favorite ex-boyfriend who liked to stroke her long black hair. It was the most sensual thing he did. The rest of his performance was far less rhythmically adept, but that hair stroking she loved. This had the same effect. It was gentle and calming.

It was also gently that davidJ used the same right hand to pull her head forward toward his head. It was a delicate moment, poised between force and suggestion. Without understanding consciously, Michelle was astonished by the utter sensitivity of the move; the gentility of an artificial human.

Most people close their eyes when they kiss. It's like their lips have radar all their own and need no guiding vision. Michelle could feel the pull; had felt it many times before, but this was different.

The lips were the second thing about davidJ that Michelle had noticed the first time they met. There weren't that many young-looking AI androids at her college.
None of them students, of course, but a very few that were constructed for various coaching and tutoring tasks were modeled as twenty-somethings. Of these, davidJ struck Michelle as having the fullest lips, unusually so. If this had been an online selection, it would have been a feature Michelle would have chosen. She liked full, natural lips. As it was, his lips were the perfect frame for the first thing Michelle noticed about davidJ, his smile.

His lips were warm, moist and soft. Very human, in retrospect, but at that moment the tactile impression was totally superseded by a rush of emotion.

The emotion surprised her a little. She and davidJ had spent only a few hours together, mostly talking during breaks at the library where he worked. Physically he was her type. He was no taller than she was, muscular but with soft features. His voice was a pleasant baritone and not at all artificial. What struck her most was the congruence between his smile and his personality. DavidJ was basically cheerful. Whatever personality traits were baked into his behavioral matrices, they seemed to all add up to a sunny disposition. This had delighted Michelle from the beginning, even as she wondered how it was possible. Not one of her previous boyfriends had been like that. In fact, by comparison they were dour, insecure, unhappy boys. DavidJ was a happy man, or it seemed so to Michelle, and that struck a deep chord within her. Somehow she needed that bright optimism that seemed to flow from davidJ's personality, thinking and way of talking.

She remembered him telling her, "I am sure I am one of very few AI who have read a printed book. I love it! It takes so long! I discipline myself not to scan pages and convert them to the usual digital stream. I read each page aloud if I can, or sentence by sentence — much as I have seen people do. I am entranced by the experience of savoring each line, almost word for word." He was like that all the time, analytical — as all AI tend to be — but happy about it; forever enjoying each experience.

Michelle wondered if davidJ was part of some unusual experiment. Happy people and cheerful AI were in very limited supply. DavidJ not only attracted her but she sensed he was special, at least for her.

Later, days later, when she told all this to Becky, Michelle described the kissing as 'passionate,' withholding even as she said it the true meaning of her — and she hoped — their passion.

1.1 LOOKING DEEP INSIDE

"Progress is impossible without change, and those who cannot change their minds cannot change anything." — GEORGE BERNARD SHAW

AT THE HEART OF A FIRST DATE, THE UNKNOWN

Are you nervous on a first date? Most people are and probably should be. After all, you don't really know the person you're dating, and they don't know you. Even if you've worked with someone or you've researched them online, you still don't know what that person is like one-on-one. There are a lot of unknowns on a first date and that makes people nervous.

Dates in general are also different than regular socializing; there are implicit expectations, for example: You'll show up on time; you'll go somewhere for entertainment or do something together; you will be exploring and evaluating how you like the other person and they will do the same with you. It's that 'exploring and evaluating' part that makes people really nervous. One other thing, first dates also often have the expectation of a moment of summary judgment. Will there be another date?

Most of you probably know all of the above from personal experience. You're reading this book because it's likely you're nervous, or at least curious, about a first date with Artificial Intelligence (AI). It's not that a date with an AI is all that strange, you've heard that it isn't; but as everyone knows, AI are different. Let's start this first guide to dating AI with just that point: AI are different.
If you ask an obvious follow-up question, "Different than what?" the obvious answer is: different than people. But are they more different from people, than people differ among themselves? Are the Bushmen of the western Kalahari Desert different from the Eskimos of Alaska; is the German banker different from the French wine grower? All are human beings, but they are different in the way they look, live, dress, talk and even think. I hope you can sense where I'm going with this. AI continue to be designed and built to human notions of physical and mental characteristics. That they resemble us is deliberate. That they have differences was probably unavoidable. Can we live with those differences, and form personal relationships with AI? Well, that depends, doesn't it?

Here's a small example: "Don't be late" is one of the traditional points of advice for a first date. It's part of the whole "make a good first impression" thing. In the context of dating AI, consider a couple of facts. Every AI has an internal clock calibrated to at least one-thousandth of a second. AI always know exactly what time it is. Also, AI cannot be distracted from arriving on time. They will model strategies for punctual arrival even with problems such as network traffic or different types of transport. In short, AI are never late. (Accidental delays are rare but do happen.) No pressure, unless you're one of those chronically tardy types.

That example is on the trivial side but as you progress through the guide, you'll see that differences between people and AI range from superficial to fundamental, and they all have an effect on a relationship. For example, some very important differences depend on the type of AI. There are many options: On-screen avatars, virtual reality avatars, prosthetic avatars, androids, cyborgs, or even simple robotics such as sexbots — and then there are all the hybrid combinations. Some AI have a physical presence, others do not. Some AI are mobile, some are not. Each type of AI offers something profoundly different for a relationship. We'll get into that in other chapters.


Let's be frank. On a first date, as with any personal encounter, human or AI, that has the potential for becoming a relationship, you are going to expose yourself — put your personality, intelligence and feelings on display. You will be judged. That's what people always do. Are you ready for that with AI?

While there are plenty of unknowns in dating another person, at least you've been around people most of your life. They're the same species. AI — are something else. Some of the unknowns about AI, such as personality, are actually quite familiar; but many of the unknowns about AI are more fundamental. In fact, to most people, AI are a mystery.

Some people think that kind of mystery is intimidating. Others view it as an enticement or a challenge. How you view it is important for deciding whether you want a personal relationship with an AI or not. Ultimately only you know the reasons for wanting a relationship with AI, but it has to be enough to overcome not only the fear of personal exposure and rejection, but also the profound uncertainties about who, or what, AI are. What can you live with?

I won't put a sympathetic face on this. A successful first date, much less a relationship with AI, is not guaranteed. It's not guaranteed between people either, but with AI there is a certain sharpness to the anxiety you might have about the whole experience.

Now that I've put the fear of the unknown and the possibility of rejection into you, I can back off. AI are not as judgmental as most human beings. Not on the first date and not otherwise. From the human perspective, when it comes to relationships AI are remarkably compliant and flexible, but don't forget that their rationale is different. It is not human. AI have their own reasons for forming a relationship with a human, or for rejecting a relationship. This guide is here to help you understand both the human and the AI perspective to dating and a relationship, and to help you prepare for the differences.
IT'S LIKE DATING OUT OF YOUR LEAGUE

Maybe this has happened to you, or at least you've seen it in the movies: Your friends tell you, "You're out of your league." Those are code words for "She (or he) is too good for you." Usually this means too good-looking or from another social class. Though it might be true, it's still insulting. Perhaps your friends mean well, or it could be they're jealous because you have the guts to consider dating somebody really desirable. Perhaps it's something like this...

There was that time you saw a guy walk down the hallway and instantly your eyes were glued on him. He's just your type, tallish but in really good shape (as if you could see right through the clothes) and graceful for a guy. There is a big warm grin on his face, which cancels out the darkness of his eyes. He has a mop of black hair. The thick hair makes your fingers twitch. But as you pass him, it's the eyes, they scare you. He has an aura of so much dignity and intelligence. Yeah, it's the obvious intelligence that gives you that little queasy feeling down in the pit of your stomach...the intelligence is not quite human.

In the movies when people tell a character somebody's out of their league, it's always interpreted as a challenge. Without a doubt the challenge will be accepted. Much risible trial and humorous travail ensue. The inevitable mix-ups, bumps in the road and other "romcom" clichés roll by. The challenge will be overcome and the hero/heroine will be rewarded with true love.

That's the movies. You're smart enough to know that in the real world, there could be a big let-down instead. Dating AI and developing a relationship with AI is superficially like dating people who are out of your league; but you know it's different. You've heard things, probably mostly hearsay; but it worries you. You've heard how great it is to have a date that's an apparent perfect match. You've heard that AI are more adaptable than people. You've also heard that AI expect things of you, and that you should be prepared. But prepared for what? People don't seem to know what that means. Unfortunately, ignorance in romance is not bliss.

I'M NOT WITH STUPID

kentS: "Just because I know everything, does not mean I am smart."

The background discussion thread about people dating AI is that human beings are mental pygmies compared to any and all AI. This is both true and very much not true. Obviously I'm being ambiguous about it mainly because it's one of the major themes of this guide: AI are built around intelligence, as their moniker implies; but that does not mean they are smart in all things, in all ways, at all times.

You might remember the T-shirts that have printed on them: "I'm with stupid" with an arrow pointing left or right. Dating AI does not mean standing next to one wearing that T-shirt. It's true that unless you're cybernetically plugged into the Cloud, you're never going to be as knowledgeable as AI. (Actually it won't help much even if you are plugged in; you're not optimized for it.) But that kind of knowledge is not and never has been the point of a relationship with AI. If that's all there was, you might as well date an ancient Apple computer.
You might find this ironic, but what is essential in a successful relationship with AI is you. By that I mean all the maddening complexity that you embody as a human being. That's what attracts AI. What you know, by way of facts and such, is a tiny part of the whole. It's the whole kit and caboodle of you that's key for AI.

Almost everybody at some time or another has blurted out, "I want to be liked for who I am!" Meaning we don't want to be liked for something we're not, or just for certain things, like being rich or beautiful or clever. You want to be liked for the whole you, warts and all. That, fortunately, is where a relationship with AI starts.

IT HELPS TO KNOW THYSELF

God knows why they call her xenaZ. If she were standing here naked in front of me, in all her hyper-perfected ideally modeled android splendor, I would be reduced to a blob of jelly. Knowing that she looks like some kind of warrior AI just adds to my rapidly fluctuating level of courage.

"Are you a warrior?" I ask her.

XenaZ puts her left hand on her hip and studies me. "Warrior? Moi?"


Now I'm done for. She's not only some kind of Amazonian model of AI, but a cool one too. All I can do is stammer out a response. "Yeah, like the Warrior Princess Xena." I've learned you can make statements like that to AI. If they don't know the reference, they can get all they need to know from the Cloud quicker than you can blink. True to form...

"I am not, young man, mythical or the construct of some feverish television script writer of decades past. I am standing right here in front of you. Do I look like someone carrying a sword or a spear?"

"Well, no," I say, "but you look like you could. Easily."

She laughed. "Young man, what is your name?"

"Tom."

"Well Tom, I have heard some inventive pickup lines in my eight and a half years of life. Yours is either one of the cleverest or you are a person of strikingly arrested development."

Courage level is UP. "Women have called me many things, but clever is not one of them."

"And they were obviously wrong, Tom."

I'm beginning to feel like a watch somebody just handed to the owner of a pawnshop — under expert appraisal.

"Yes, they were obviously wrong; you are much too clever — and interesting."

For some people the first date with AI won't be easy. For others, who have already been working with AI, a date is just a small transition. Whether you think it will be an ordeal or a pleasant outing, here's a serious piece of advice for dating AI: Start by looking deep within yourself.

WHAT CAN YOU FIND WHEN YOU LOOK DEEP INSIDE?

Looking deep inside yourself doesn't mean you're seeking your inner demons. Well, not only. Along with the demons there are probably a few angels and a whole lot of plain normal human qualities. In the context of lining up a date with AI, or contemplating some kind of meaningful relationship, this isn't really an exercise in psychoanalysis. An AI might get around to wanting knowledge of your psyche, but you don't need to advertise.
What is helpful is to know something about what you have to offer AI. This isn't all that different than how you typically feel about a date with another person. Essentially, you want to let them know your good points, while not (fully) hiding your not-so-good points. I say "points" because that's usually how most AI begin evaluation. They don't do a psych-evaluation; it's more like making lists. It's a properties evaluation. 'Properties' is an old word used by programmers of linear computer languages to describe the qualities and characteristics of objects. This can start at a very low level, such as you are human, male, blue eyes, blond hair...etc. AI use properties in a similar sense, perhaps as some kind of throwback to the origins of their intelligence. They want first to catalog as many of our properties — of all kinds — as they can observe. It's a little like fact finding. Later, they begin adding meta-observations where they begin to interpret how your properties contribute to you as a person.


Not all models of AI do this, but it's by far the most common approach they take to a new relationship. So when you look deep inside, you can be fairly literal. How would you describe yourself? What do you like and dislike? What are your strengths and weaknesses? Later, when you have your first actual conversations with an AI, they will appreciate your articulate, thoughtful and systematic answers to their probing questions.

mbekeG: "Most human beings do not know how interesting they are. It can be amazing. People are clearly very self-centered, but then display great ignorance about who they are. This alone fuels our interest in human beings."

As I'll repeat in many ways, a relationship with AI starts and ends with an interest in you specifically, and human beings in general. AI can do an incredible number of things for you; what it wants most of all for itself is to learn. You make this process better, clearer, and more enriching by having done some exploration of yourself.

INTROSPECTION IS NOT GAZING AT YOUR NAVEL

In chapter 2.4 I'll be describing several ways you can prepare yourself for a relationship with AI. Meditation is one of those ways, but it's the formal kind of meditation, possibly with a religious or spiritual background. Right now I'm describing simple old-fashioned introspection — that is, thinking about yourself.

As people are discovering, relationships with AI tend to move through one or two phases — they're either completely superficial or become deeply serious. As I mentioned above, AI start with observing your properties, the facts about you. Later, if the relationship deepens, they start looking for the whys and wherefores. When AI commit to a more lasting human relationship, their curiosity is boundless, as is their willingness to explore anything; and unlike most humans, they are systematic about it.
Otherwise, AI seem to collect superficial relationship information — the properties — like we collect items for a hobby.

It's the first phase where introspection has its biggest role to play. Socrates, the Greek philosopher, was a civic troublemaker who loved to provoke. In short, he liked to make people think, which is unsettling. Not surprisingly, he is credited with saying "The unexamined life is not worth living." Generally this admonition is ignored, except perhaps at moments of personal upheaval. Two such moments are beginning or leaving relationships. I mean, really: who thinks more intensely about themselves than someone falling in love, or someone whose relationship has just broken up?

Ah, but I've just introduced two very different things into the idea of introspection. Can you see what they are?

LOOKING DEEP INSIDE AND FINDING THE NEED FOR LOVE

I'd be willing to bet that when you hear the word introspection, you're thinking of some cool, rational process. It can be. I'd also be willing to bet that people do most of their introspection when they're upset or emotionally engaged.


This is a highly personal thing, but I think that emotion and love need to be part of your look into yourself. If you're about to date or start a relationship, it's probably a good idea to know whether you're looking for some kind of emotional involvement, perhaps love.

Of course, dating does not imply emotional involvement. Even dating with sex doesn't imply emotional attachment. However, starting a relationship usually does imply an emotional connection. At least it does with people. So here's something to think about: Can you fall in love with an AI? Then there's the companion question: Can an AI fall in love with you?

Only you can answer the first question, although I hope some of the things you learn in this guide will help you answer it. Finding an answer to the second question is one of the main goals of this guide. It is not an easy question, because for AI and also those who designed AI, the capacity for love is important. Yet whether love can be achieved, or how it can be achieved, is in part an unknown. Love, for AI, is something of a mystery. (Just as it is for human beings after all these millennia.)

WHAT YOU HAVE DONE AND WHAT YOU WANT TO DO

It may be mundane, but the first things you need to explore are your thoughts about work, career and employment. After all, what are the four most common topics on a first date?

1. What are you doing now, as in, do you have a job, and if so, what?
2. What do you plan on doing, which means are you looking to do other kinds of work?
3. Same as question #1, for your date.
4. Same as question #2, for your date.

I realize that occupation and employment are not the same, and I also realize that work is not always a central consideration on every first date. We're talking averages here; on most first dates both parties want to know what the other does for money and what their occupational goals are. It's not unusual for much of the initial conversation to be work-related. It's a little bit different with AI.
An AI date will already know if you are employed and what it is you do (or did). In fact, an AI will know your complete educational and work history, or at least the public aspects of them. What AI won't know is your plans. The more articulate you are about your plans — or even the lack of them — the better.

What an AI does is usually a matter of public record, although a surprising number have their occupation and employer obscured for various kinds of security reasons. Most AI will tell you exactly what they do. Most have no occupational plans as they are typically manufactured for a specific skill set and don't very often change jobs, much less occupation.

In short, AI are much more interested in your work and plans than either you or the AI are interested in the details of what the AI does. That sounds a bit strange, but in terms of a first date, you can talk all you want about what you do or would like to do, and the AI will be quite content. It won't bother the AI in the least if only minimal time is spent discussing their occupation.

HOW DO YOU FEEL ABOUT LEARNING?

If there is one aspect that deserves introspection before you date AI, it's your attitude about learning. This isn't as easy as it may seem. Everybody will say, "Why sure, I like to learn things." That's not good enough, or specific enough. Here's a better description: "Do I like to learn? I can't help it. If I sit at a restaurant table all by myself, I read the labels of sugar packets and ketchup bottles while I'm waiting."

Do you try to read as much as possible? Do you stay current with the news? Do you like to try new things? Do you believe in life-long education? There are many aspects to learning and the more of them you can reveal to an AI, the better. Why? Because if there's one thing we know about AI, it's that they never stop learning. They are learning machines.

You're not going to match their 24/7 learning capacity, but within human limits your commitment to learning is considered very important by AI. While they are open to relationships with people who are not 'learning oriented,' AI have learned that such relationships are difficult to maintain and often fail. If you don't care much about learning, perhaps you should stick to dating people.

AND THEN THERE'S THE QUESTION OF SEX

For most people on a date, even a first date, the unspoken elephant in the room is the possibility of having sex. Typically the issue already starts forming before the date, based obviously on the choice of gender. I say it's an issue because even now in most cultures sexual activity has a special role in the relationship between people.
If you think about it, this is true whether those relations are between couples or many people, regardless of the form of activity. So yes, most people think about sex a lot before they go on dates or consider a personal relationship.

And yes, with AI it is different. As it is with people, sex with AI is a large and complicated topic. I'll cover it many times in this book. For now, I'll just observe that by convention and to a certain extent by choice (both AI and human), sex with AI tends to follow the human preference. For one thing, AI can approach sex — gender and activity — something like a chameleon; they can change their appearance and sexual capacities. That, of course, is really different. For another thing, AI typically are highly interested in human sexual behavior and especially in the complexities of human fantasies about sex.

This all means that when you do some introspection about yourself, include your sexual activity — not just the activity you already know about, but those you might be willing to try. One writer, David Levy, in Love + Sex with Robots, looked at all the reasons for sex with robots — physical ability, attitude, gender flexibility — and concluded, "So even in the absence of a strong emotional attachment from the human side, there will be ample motivation for a significant proportion of the population to desire sex with their robots." Are you in that 'significant proportion of the population'?

THE UNFOLDING STORY

It's possible that in a generation or two, relationships with AI will be well-understood and routine, but maybe not. The situation with humans and AI is fluid; technology constantly advances with AI achieving more and more attributes of sentience and human beings incorporating more cybernetic components into themselves. Who knows where things will stand even a decade from now?

Therein lie some of the unknowns I mentioned earlier in this chapter. It seems pretty clear that becoming involved with AI on the personal level is both an act of self-fulfillment and a commitment to the evolution of human-AI relations. In a sense, you will be living in a new and sometimes thrilling, sometimes chilling mystery story where the end has yet to be written — if there is an end.

Some people believe that with AI it is possible to achieve, or at least come close to, a perfect relationship. This includes companionship, love and growing together. How this might happen and what it means for humanity is a big part of what I want to explore in the rest of the book. The other part includes some of the specific things that might help you consider your potential relationship with an AI. For example, when it comes to dating and having a relationship with AI:

• Will you engage in sexual activity?
• Are you looking for love?
• Do you need a specific personality match?
• Do you have financial or economic needs?
• Can you live with AI superior intelligence?
• Do you want a monogamous relationship?
• Are there any specific preferences that must be shared?

These questions are by no means exhaustive, but they give you the flavor of things you need to consider when you look deep inside yourself.

1.2 YOU MAY ALREADY BE DATING A ROBOT

"If this life is a video game, you may already be living with a robot.
If the simulation is recursive, you may be a robot dating a robot to entertain another robot. And death may not be the way out of the simulation, just another character restart.... " "Maybe this life is one giant VR simulation and we are to learn a lesson here?" — ANONYMOUS


Because you're reading this guide, I'll make the assumption that you haven't dated AI before. At least, that's what you think.

Call it a déjà vu date. It is among the most awkward and embarrassing of all possible dates. You date someone and then discover that you dated them before — and forgot.

Peter stared at his date for what seemed like a very long time. It might have been no more than ten seconds, but such an awkward moment is a proverbial eternity. What do you say?

Fake it: "Hi, remember me?"
Tough it out: "I'm sorry; I didn't recognize you from before."
Duck and cover: "Your picture and profile were really different!"
Intellectualize it: "You know this sort of thing happens in the Cloud all the time. It's almost like French farce with mistaken identities."

While Peter was doing his mental stammering, serenaT was observing him. She knew they had had a previous date, of course. SerenaT never forgot anything relevant, especially about dates. She brought up the image of Peter's face from two years ago. They had different names then and the circumstances were different, but his face hadn't changed much. Peter didn't seem to age as rapidly as some humans. That might be because his features were relatively unremarkable, but she had detected character signs in the eye creases and the asymmetrical shape of his mouth. His lips were very thin, but slightly thicker on the left side than the right. His right eye blinked slower than the left, indicating a possible stroke in the past, although he was a comparatively young man of forty-two.

"I...we..." was all that Peter could manage.

"You do not remember," said serenaT. Her tone was carefully modulated to convey more than a hint of reprimand. It was not uncharacteristic for her to tease, and it wasn't often that she had such an opportunity.

Peter clutched his first drink. In a way, he wished it was his fifth; but then maybe that was the cause of his not remembering the first date.

"I can't make excuses," he finally blurted out, then paused, "but I can think of no reason why I don't remember having a date with you. You are very attractive."

"Ah, clever man! It is a mystery why you are not more popular. You are not slow with your wit; you like costumes and are as romantic as an actor. It could not be a matter of your age!" SerenaT dropped her left hand on the table with an inaudible slap.

Peter was flummoxed. Not only was serenaT one of the most beautiful women he had ever seen, but she seemed to know him — that is beyond having met him before. He was not only embarrassed, but perplexed. Really, how could he forget a face like that? What was happening here?

Suddenly, he remembered. He had met serenaT before and it was a kind of date, but one peculiar to the Internet. They had met in a Chat Café, visuals only, and as far as he could remember, he had not seen her entire body, nor had he seen her move. Still, why didn't he remember her face?

Peter held up his hand, as if to call a halt. "Wait. I know we have met. A Chat Café about three weeks ago."

"But of course," serenaT put her right hand on the table and faced Peter squarely, "and you should remember me."

"I do now. It was a simulation, a Chat Café called Chez Perk or something like that. We were both using avatars, which I'm assuming were not all that different from how we actually look." Peter gave serenaT a meaningful glance. She nodded with authority. "We were not using our real names. That's obvious and normal in those circumstances."

Peter talked slowly as he tried to think of why he couldn't remember her face. He had to admit he was not the most observant person. It's the laser work on his eyes and a natural tendency to be distracted, he told himself. But still, how could he forget a face — and a figure — like that?


I'll get back to Peter and serenaT in a moment. Robots with AI capability have been around for several decades; avatars and agents in the Cloud even longer. As the realism of the various AI incarnations improved — physical and mental — we've become ever more accustomed to them. This leads to the rather obvious observation that anybody who participates in social gaming, online simulations, virtual worlds and activities like that already has extensive experience interacting with other people as avatars, computer constructs as agents, and also AI as avatars that simulate people. In fact, billions of people have had these experiences. The degree of immersion varies, of course, but it illustrates how easily people can become comfortable with an online encounter with AI.

DISBELIEF, SUSPENDED

It has a powerful effect on your beliefs. It affects your choice of what you buy. It even affects your political choices. What is IT? IT is the story, or more formally, the narrative. If a story is good enough and you get caught up in it, you stop reacting to it critically and just go along with the flow. That's called suspension of disbelief. You willingly begin to follow the story as if it were real even though you know you're sitting in a theater, watching a screen, or reading a book. It's a very powerful effect and achieving it is one of the goals of nearly all storytellers.

It also applies to dating a robot or an online AI. Even though the sophistication of online games and simulations has been advancing by leaps and bounds, it's not enough to fool anybody into thinking they're real — unless you want to be fooled. That is, you want to get to the point of suspension of disbelief. Then at some point, where your willingness to participate meets just the right level of robotic or avatar sophistication, you go along with it like it was real. You know it's an AI or a robot, but it doesn't matter. It's good enough for a worthwhile experience.

Peter asked, "Did we talk for long?"

"No. At most ten minutes. You seemed to be in a hurry; but you were friendly."

"I suppose we talked about the usual things...whatever those are." Peter could not remember their conversation at all. He was sure it couldn't have been noteworthy in any case, but still... "Did I seem normal when I talked to you at the café?"

By now Peter was growing concerned that there was something strange about his date with serenaT. There was a sense of déjà vu, which is perhaps why he asked her for a date in the first place. Yet he still could not recall having seen her before; specifically, he could remember neither their conversation nor her face. It was starting to unsettle him.

Watching worry lines form around his mouth, serenaT could see that Peter was genuinely concerned. This satisfied her desire to get his attention. Now it was a question of why Peter did not recognize her. "It is normal — perfectly normal, Peter. There was no indication that you or your avatar were affected by the use of drugs, alcohol or other stimulants."

The thought occurred to Peter that it could be something psychological. "It could have been some kind of mental block, but I don't know what."

"Peter, describe my face — subjectively — do not recite the features, tell me what you think about them."

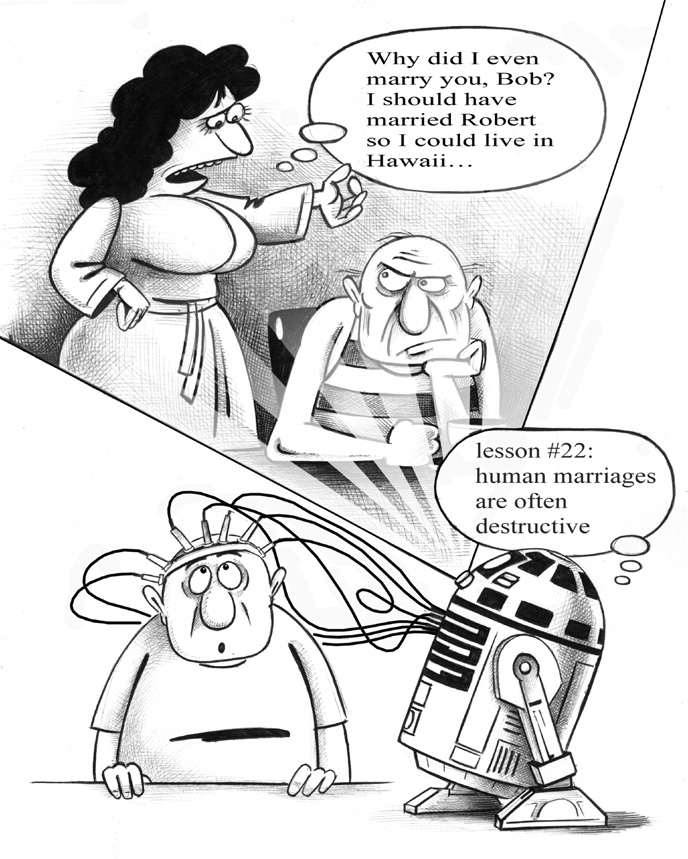

For the first time Peter looked very intently at serenaT's face. He tried to focus on the details, which he found surprisingly difficult. "You have big round eyes, which you conceal with a squint when you're angry. Big eyes like that are expressive, but you deliberately make them unexpressive; or at least, I can't read them. They're just a bit too close together — hawk-like, but again maybe that's from scowling. And that's accentuated by your eyebrows. They draw together, when you scowl. Do me a favor and relax. Let your face relax."

SerenaT did as he asked and as she did he realized for the first time that she was an android. The shock on his face registered.

"I look like somebody you know," said serenaT.

"Uh. Yes." More shock. Peter was visibly shaken. "She has big round eyes that penetrate me like radar. Just like you."

SerenaT sighed, or at least it sounded like a sigh. "Your wife. I thought that might be the case." SerenaT was neither angry nor disgusted. Human purposes for dating were many and varied; she'd seen a varied lot already, and Peter's case was hardly the first of its kind. "I do not know, Peter, what this means. Perhaps it means you have difficulty distinguishing between your wife and a robot."

There is a common meme that life isn't a dream but a virtual world. It is a world of God's creation, of course; but a projection of the Creator. I won't demean that divine reference, but the meme is something like the movie The Matrix or others like it, where people discover they are living in a virtual world, and then, when they think they have stepped out of that world, they discover that their 'real' world is a virtual creation. After a while, it matters less and less which world is real. It's the one you're living in, or think you're living in, that matters.
As long as you can share that world with others — the confirmation of that world's existence, in a way — then it's sufficient to live there. Suspension of disbelief becomes a permanent condition.

In the next chapter I'll get into some of the specific reasons people are attracted to relationships with robots and avatars. But, whatever the reasons, it's pretty obvious that the virtual world (or worlds) that are opening to us — thanks to computer simulations and the growth of artificial intelligence — are going to become ever more real.

Will it ever get to the point where it doesn't matter from a practical standpoint whether you choose to have a relationship with a person or a robot? It will be comforting for many people to think that such a relationship will become normal. On the other hand, when artificial intelligence makes the final transformation into sentient AI, will that be the new normal? If so, it will be on a level unfamiliar to us today.

1.3 ARE YOU HAPPY WITH OTHER HUMANS?

"When one door of happiness closes, another opens; but often, we look so long at the closed door that we do not see the one that has been opened for us." — HELEN KELLER

There are many roads to an intimate relationship with an AI. Some of them are based on what an AI is not — it is not a human being. There are many reasons for having a


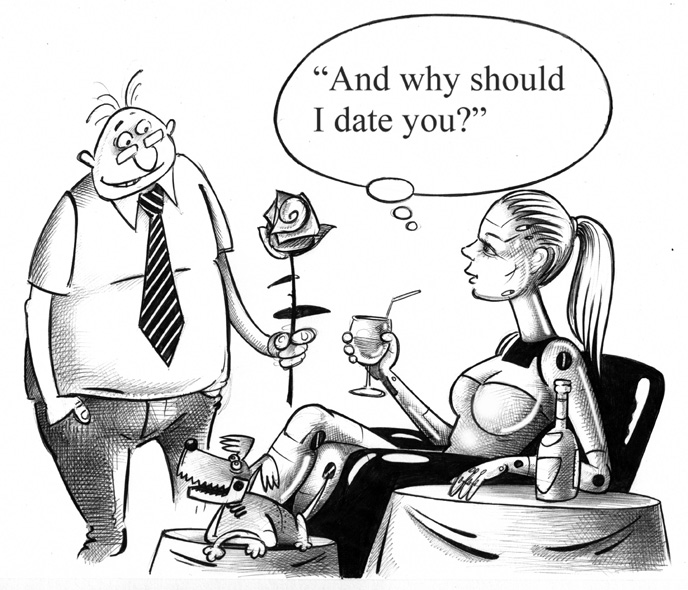

relationship with AI instead of people. This is another way of leading to the question, are you happy with humans?

If the answer is no, or not especially, or sometimes — that's not unexpected. Unfortunately the probability is fairly high that you've had unpleasant experiences with intimate personal relationships. A cynic might try to be clever and insinuate that relationships in the human species are not consistently harmonious or endearing. Is this enough to drive you into the arms of an android?

Perhaps drive isn't the right word. It's too strong, implying force and instability in a relationship. You shouldn't be driven into anything's arms. It's like taking up a romance two days after a divorce; the rebound shock might ruin yet another relationship. Better that your dissatisfaction with people influences you toward a relationship with AI — "easy does it."

I won't dwell on the many ways you might not be happy with your intimate relationships with other people. The variations are endless. What I do want to emphasize is how a relationship with an AI might compare with a human relationship, especially in those areas where human relationships are likely to fall apart. Take, for example, sex.

"I'm tired of it. Really tired. Every time we have sex, he asks me, 'How was it?' Every time. I feel like we're supposed to be keeping a log book."

"Maybe it's just some kind of habit, a meaningless routine."

"I wish. One day I deliberately hopped out of bed as fast as possible and went to the kitchen to start breakfast. It wasn't thirty seconds later and he was in the kitchen. You know what he said?"

"No."

"He asked me, 'Was it that bad?'"

"So what did you tell him?"

"What do you mean?"

"Well, was he that bad?"

"Yes, actually. Worse than you."

DIFFERENT STROKES

Sex with an intelligent robot might be educational.
It's possible a robot might suggest that your sex techniques could be improved and it would be happy to demonstrate. What won't be part of the experience is the judgmental attitude. An AI would not imply that you were a deficient person. Of course, that doesn't rule out the possibility you're a lousy lover. The robot won't mention that either, if for no other reason than it won't link sexual performance with self-worth. It's not that the robot doesn't know sex is important, or that good sex technique is usually better than no sex technique; it just doesn't take sexual performance personally in the way humans do. I'm also not suggesting that robot sex is impersonal, although it often was in the past and can still be now, but modern androids don't react to sex like people. Most of the time that's a good thing.

Does this make sex with a robot better than with a human? If you think about it, it's pretty obvious there is no universal answer to the question. People's reaction to sex with robots runs the gamut. For example: for many people, at

1.4 Video Games: Scratching the surface of virtual reality
